Hybrid Deep Learning Gaussian Process for Diabetic Retinopathy Diagnosis and Uncertainty Quantification

July 29, 2020

Abstract

Diabetic Retinopathy (DR) is one of the microvascular complications of Diabetes Mellitus and remains one of the leading causes of blindness worldwide. Computational models based on Convolutional Neural Networks represent the state of the art for the automatic detection of DR using eye fundus images. Most current work addresses this problem as a binary classification task. However, including grade estimation and quantification of prediction uncertainty can potentially increase the robustness of the model. In this paper, a hybrid Deep Learning-Gaussian process method for DR diagnosis and uncertainty quantification is presented. This method combines the representational power of deep learning with the ability of Gaussian process models to generalize from small datasets. The results show that uncertainty quantification in the predictions improves the interpretability of the method as a diagnostic support tool. The source code to replicate the experiments is publicly available at https://github.com/stoledoc/DLGP-DR-Diagnosis

1 Introduction

Diabetic Retinopathy (DR) is a consequence of Diabetes Mellitus that manifests itself in the alteration of vascular tissue. When the blood supply is altered, lesions such as microaneurysms, hemorrhages and exudates appear [1]. These lesions can be identified in eye fundus images, one of the fastest and least invasive methods for DR diagnosis. Although early detection and monitoring are crucial to prevent progression and loss of vision [2], in developing countries approximately 40% of patients are not diagnosed due to lack of access to medical equipment and specialists, which puts patients of productive age at risk of visual impairment [1], [3]. Therefore, to facilitate access to rapid diagnosis and speed up the work of professionals, many efforts have been made to develop machine learning models focused on the analysis of eye fundus images for automatic DR detection.

For medical image analysis, deep Convolutional Neural Networks represent the state of the art. These methods work by means of filters that go through the image and exploit the natural structure of the data, being able to detect increasingly complex patterns. However, the success of these deep learning models depends on the availability of very large volumes of data, and this is not always the case for medical image datasets. For instance, one of the largest publicly available image datasets for DR detection is EyePACS [4], which has 35126 samples for training. For this reason, training a deep learning model for this problem from scratch is not always feasible [5]. Instead, fine-tuning of pretrained models is preferred, as it allows the models to refine general knowledge for a specific task. However, the number of task-specific sample images is not always enough for fine-tuning to produce good final performance [5].

Classical machine learning methods such as Gaussian Processes (GP), on the other hand, were originally designed to work well with small datasets [6]. They have several advantages over deep neural network models, such as a lower number of parameters to train, convex optimization, modularity in model design, the possibility of incorporating domain knowledge, and, in the case of Bayesian approaches, the calculation of prediction uncertainty [7]. The latter would be useful in medical applications, as it gives the final user an indication of the quality of the prediction [8].

This work presents and evaluates a hybrid deep learning-Gaussian process model for the diagnosis of DR and the quantification of prediction uncertainty. Taking advantage of the representational power of deep learning, features were extracted using an Inception-V3 model fine-tuned on the EyePACS dataset. These features are then used to train a GP regressor for DR grading.

Our framework shows that:

The proposed hybrid model, trained as a regressor for the DR grade, improves binary classification results compared with the deep learning approach alone.

When applied to small datasets, Gaussian processes can improve the performance of deep learning methods by leveraging the latter's ability to learn good image representations.

The integration of a GP endows the method with the ability to quantify the uncertainty in the predictions. This improves the usability of the method as a diagnostic support tool. Furthermore, the experimental results show that the prediction uncertainty is higher for false negatives and false positives than for true negatives and true positives, respectively. This is a highly valued capability in computational medical applications.

The paper is organized as follows: Section 2 presents a brief review of previous work on automatic DR classification and the estimation of its uncertainty. Section 3 introduces the theoretical framework for the experiments, which are described in Section 4. Finally, Section 5 presents the conclusions.

2 Related Work

Many approaches have been proposed for binary DR detection, most of them based on deep neural networks [9]. Some of them combine deep models with metric learning techniques, as in [10], where an Inception-V3 is trained and embedded into siamese-like blocks. The final DR binary or grade prediction is given by a fully-connected layer. In [11], a customized deep convolutional neural network to extract features is presented. The features and multiple metadata related to the original fundus image are used to train a gradient boosting classifier that performs the DR prediction. In [12], an Inception-V3 model is once again fine-tuned using a private set of eye fundus images, this time with five DR grade labels instead of binary labels. The results are reported using a subset of the Messidor-2 dataset [13], [14]. This makes performance comparison impossible with many other results, including those presented in this paper. Better results were reported by Gulshan et al. in [15], where an ensemble of ten Inception-V3 models, pretrained on ImageNet, is fine-tuned on a non-public eye fundus image dataset. The final classification is calculated as the linear average over the predictions of the ensemble. Results on Messidor-2 were reported, with a remarkable 99% AUC score. In [16], Voets et al. attempted to reproduce the results presented in [15], but this was not possible since the original study used non-public datasets for training. However, Voets et al. published the source code and models, and details on the training procedure and hyperparameters are published in [16] and [17].

Regarding the estimation of predictive uncertainty, the first work on this matter for DR detection models was proposed in [8], where Bayesian inference is used for uncertainty quantification in binary classification of DR. Another approach is presented in [18], where stochastic batch normalization is used to calculate the uncertainty of the prediction of a model for DR level interval estimation. In the work presented in [19], a dataset with multiple labels given by different doctors for each patient is used, which allows the calculated uncertainty to be used to predict professional disagreement in a patient diagnosis.

In relation to uncertainty estimation for convolutional neural networks using GPs, some work has been done, especially outside the context of automatic DR detection, as in [20], where a framework is developed to estimate uncertainty in any pretrained standard neural network by modelling the prediction residuals with a GP. This framework was applied to the IMDB dataset for age estimation based on face images. Also, in [21], a GP on top of a neural network is trained end-to-end, which makes the model much more robust to adversarial examples. This model was used for classification on the MNIST and CIFAR-10 datasets.

To our knowledge, this is the first work that implements a GP to quantify the uncertainty of a model's predictions for DR diagnosis.

3 Deep Learning Gaussian Process For Diabetic Retinopathy Diagnosis (DLGP-DR)

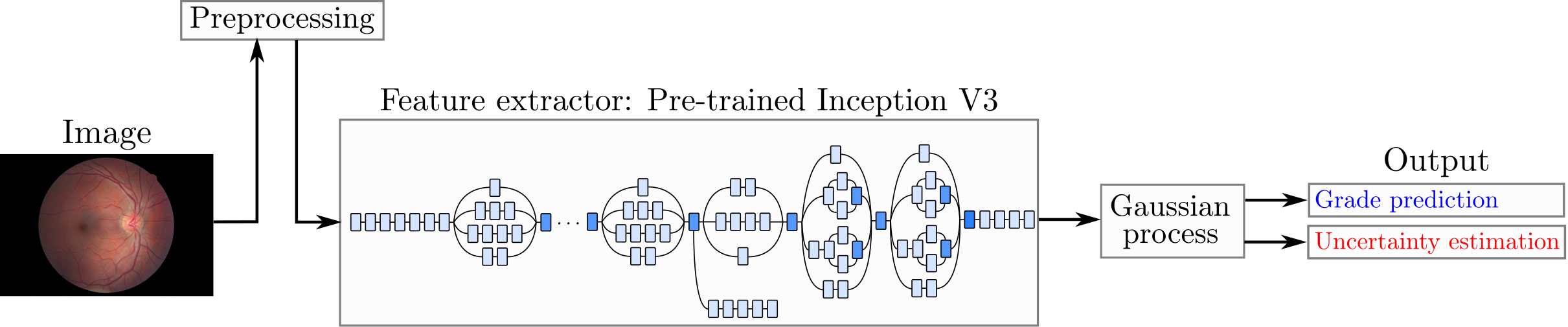

The overall strategy of the proposed Deep Learning Gaussian Process For Diabetic Retinopathy grade estimation (DLGP-DR) method comprises three phases and is shown in Fig. 1. The first phase is a pre-processing stage, described in [16], which is applied to all eye fundus image datasets used in this work. This pre-processing eliminates the very dark images where the circular region of interest is not identified, removes the excess black margin, and resizes the images to 299\(\times\)299 pixels. The second phase is feature extraction. An Inception-V3 model, trained with ImageNet and fine-tuned with the EyePACS dataset, is used as feature extractor. Each sample is then represented by a 2048-dimensional vector. The third and final phase is the DR diagnosis, which is performed by a GP regressor.

Figure 1: Proposed DLGP-DR model. Fine-tuned Inception-V3 is used as feature extractor. The extracted features are then used to train a Gaussian process.

3.1 Feature extraction - Inception-V3

Many previous works have used deep learning models for the diagnosis of DR. Recently, Voets et al. [16] attempted to replicate the results published in [15] by fine-tuning an ensemble of ten pretrained Inception-V3 networks. While Voets et al. were not able to achieve the same results reported in [15], most of the implementation details, as well as the specific partitioning for the training and test sets, are publicly accessible and were used in this study for the fine-tuning of an Inception-V3 model. Once the model is trained, feature extraction is achieved by defining the global average pooling layer of the network as the output of the model and applying it to all the images in the datasets. Thus, each image is represented by 2048 features, which are used to train and evaluate the GP model.
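To make the pooling step concrete, the following is a minimal sketch of global average pooling in plain Python. It is not the authors' code: the function name `global_average_pool` and the toy feature map are illustrative assumptions, and the toy map has only three channels, whereas the vectors used in this work have 2048 entries.

```python
def global_average_pool(feature_map):
    """Average an H x W x C feature map over its spatial positions.

    feature_map is a nested list indexed as [row][column][channel].
    The result is a list with one mean value per channel.
    """
    height = len(feature_map)
    width = len(feature_map[0])
    channels = len(feature_map[0][0])
    sums = [0.0] * channels
    for row in feature_map:
        for pixel in row:
            for channel, value in enumerate(pixel):
                sums[channel] += value
    return [total / (height * width) for total in sums]


# Hypothetical 2 x 2 feature map with 3 channels (toy values).
toy_map = [
    [[1.0, 0.0, 2.0], [3.0, 0.0, 2.0]],
    [[5.0, 4.0, 2.0], [7.0, 0.0, 2.0]],
]
features = global_average_pool(toy_map)
```

Each channel collapses to a single number, so the length of the feature vector equals the number of channels of the last convolutional block, regardless of the spatial size of the map.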

3.2 Gaussian Processes

Gaussian processes are a Bayesian machine learning regression approach that is able to produce, in addition to the predictions, a value of uncertainty about them [6]. The method requires as input a covariance given by a kernel matrix. Here, the kernel matrix is the Gram matrix computed over the training set with a Radial Basis Function (RBF) kernel. This RBF kernel depends on two parameters, which are learned during the training process. We performed a Gaussian process regression, where the labels are the five grades of retinopathy present in the EyePACS dataset. Starting from the prior, the GP calculates the probability distribution of all the functions that fit the data, adjusting the prior with the evidence and optimizing the kernel parameters. Predictions are obtained by marginalizing the final learned Gaussian distribution, which in turn yields another normal distribution, whose mean is the value of the prediction and whose standard deviation gives a measure of the uncertainty of the prediction. Thus, an optimized metric (attached to an RBF similarity measure) is learned from the data and used to estimate the DR grade.
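The predictive mean and standard deviation described above can be illustrated with a small, self-contained sketch of GP regression with an RBF kernel. This is only an illustration under stated assumptions, not the implementation used in the experiments: the kernel parameters (`length_scale`, `signal_var`) are fixed by hand instead of being learned, a small observation noise `noise_var` is added to the Gram matrix for numerical stability, the prior mean is zero, and the one-dimensional training data are toy values.

```python
import math


def rbf(a, b, length_scale, signal_var):
    """RBF kernel between two feature vectors."""
    sq_dist = sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return signal_var * math.exp(-sq_dist / (2.0 * length_scale ** 2))


def cholesky(matrix):
    """Lower-triangular factor L with L * L^T = matrix."""
    n = len(matrix)
    lower = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(lower[i][k] * lower[j][k] for k in range(j))
            if i == j:
                lower[i][j] = math.sqrt(matrix[i][i] - s)
            else:
                lower[i][j] = (matrix[i][j] - s) / lower[j][j]
    return lower


def forward_sub(lower, b):
    """Solve L z = b for z."""
    out = []
    for i in range(len(b)):
        s = sum(lower[i][k] * out[k] for k in range(i))
        out.append((b[i] - s) / lower[i][i])
    return out


def backward_sub(lower, b):
    """Solve L^T x = b for x."""
    n = len(b)
    out = [0.0] * n
    for i in reversed(range(n)):
        s = sum(lower[k][i] * out[k] for k in range(i + 1, n))
        out[i] = (b[i] - s) / lower[i][i]
    return out


def gp_predict(train_x, train_y, test_x,
               length_scale=1.0, signal_var=1.0, noise_var=1e-2):
    """Return (mean, std) of the zero-mean GP posterior at each test point."""
    n = len(train_x)
    gram = [[rbf(train_x[i], train_x[j], length_scale, signal_var)
             + (noise_var if i == j else 0.0)
             for j in range(n)] for i in range(n)]
    lower = cholesky(gram)
    # alpha = (K + noise * I)^{-1} y, computed with two triangular solves.
    alpha = backward_sub(lower, forward_sub(lower, train_y))
    results = []
    for x in test_x:
        k_star = [rbf(x, xi, length_scale, signal_var) for xi in train_x]
        mean = sum(k * a for k, a in zip(k_star, alpha))
        v = forward_sub(lower, k_star)
        var = rbf(x, x, length_scale, signal_var) - sum(vi * vi for vi in v)
        results.append((mean, math.sqrt(max(var, 0.0))))
    return results


# Toy 1-D "features" with grade-like labels (made-up values).
train_x = [[0.0], [1.0], [2.0], [3.0]]
train_y = [0.0, 1.0, 3.0, 4.0]
(near_mean, near_std), (far_mean, far_std) = gp_predict(
    train_x, train_y, [[1.0], [10.0]])
```

In this sketch, the test point that coincides with a training sample gets a small standard deviation, while the point far from all training samples falls back to the prior, with a standard deviation close to the square root of `signal_var`. This is the behavior that makes the standard deviation usable as an uncertainty measure.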

This GP can be adapted to do binary classification. One simple way to do this is to define a threshold on the regression predictions. The standard way, however, consists in training a GP with binary labels and passing the output of the regression through a sigmoid function. This results in a Gaussian Process Classifier (GPC). In any case, the predictions of a GPC are no longer subject to a normal distribution, and the uncertainty cannot be measured. Therefore, the GPC will not take part in this study.

4 Experimental evaluation

4.1 Datasets

Experiments were performed with two eye fundus image datasets: EyePACS and Messidor-2. EyePACS comes with labels for five grades of DR: grade 0 means no DR; grades 1, 2, and 3 mean non-proliferative mild, moderate and severe DR, respectively; and grade 4 means proliferative DR. For the binary classification task, according to the International Clinical Diabetic Retinopathy Scale [22], grades 0 and 1 correspond to non-referable DR, while grades 2, 3, and 4 correspond to referable DR. In order to achieve results comparable with [16], we took the same EyePACS partition used for training and testing (see Table 1). This partition was constructed only to ensure that the proportion of healthy and sick examples in training and testing was the same as that reported in [15]. The EyePACS training set is used for training and validation of the Inception-V3 model. Then, the feature extraction described in Section 3 is applied. The extracted features are used for training the DLGP-DR model. The evaluation is performed on the EyePACS test set and on Messidor-2, which is a standard dataset used to compare performance results in the DR diagnosis task. Dataset details are described in Table 1 and in Table 2.

| Class | Test Samples |
|---|---|
| 0 | 1368 |
| 1 | 380 |

| Grade | Train Samples | Test Samples |
|---|---|---|
| 0 | 37209 | 7407 |
| 1 | 3479 | 689 |
| 2 | 12873 | 0 |
| 3 | 2046 | 0 |
| 4 | 1220 | 694 |

4.2 Experimental Setup

An Inception-V3 network, pretrained on ImageNet and available in Keras [23], was fine-tuned. The model was trained for the binary DR classification task. The data augmentation configuration for horizontal reflection, brightness, saturation, hue, and contrast changes is described in [17], and it is the same as that used in [16] and [15]. The top layer of the Inception-V3 model is removed and replaced by two dense layers of 2048 and 1 neurons. BinaryCrossentropy was used as the loss function and RMSprop as the optimizer, with a learning rate of \(10^{-6}\) and a decay of \(4\times10^{-5}\). The performance of the model is validated by measuring the AUC on a validation set consisting of 20% of the training set.

Once the model is trained, the average pooling layer of the Inception-V3 model is used as output for feature extraction. The extracted features are normalized and used to train a GP regressor over the five DR grade labels, that is, to perform the DR grading task. Therefore, the output of DLGP-DR is a continuous number indicating the DR grade.

Two baselines were defined to compare the DLGP-DR performance. The results reported by Voets et al. [16] constitute the first baseline of this study. The second baseline is an extension of the Inception-V3 model with two dense layers trained on the same feature set as the Gaussian process, which is called NN-model hereafter.

4.3 EyePACS results

DLGP-DR is evaluated on the EyePACS test partition. The results are binarized using a threshold of 1.5 (which is coherent with referable DR detection) and compared with the baselines in Table 3. In addition, although uncertainty estimation is not used to define or modify the prediction, DLGP-DR uncertainty is analysed for false positives (FP), false negatives (FN), true positives (TP) and true negatives (TN). As mentioned before, referable diabetic retinopathy is defined as the presence of moderate, severe or proliferative DR. Thus, the false negatives are the patients that belong to grade 4 but are classified as grade 0 or 1, and the false positives are the patients that belong to grade 0 or 1 but are classified as grade 4. The results are shown in Fig. 2 and Fig. 3.

Figure 2: Standard deviation for samples predicted as negative (non-referable) instances by DLGP-DR. FN: false negatives, TN: true negatives.
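The binarization at 1.5 and the per-outcome uncertainty analysis can be sketched as follows. This is a hypothetical illustration rather than the evaluation code of the study: the function names are assumptions, a prediction exactly equal to the threshold is treated as negative by assumption, and all grades, predictions and standard deviations below are made-up toy values, not results from the experiments.

```python
from statistics import mean


def group_by_outcome(true_grades, predicted_grades, threshold=1.5):
    """Split sample indices into TP, TN, FP and FN after thresholding."""
    groups = {"TP": [], "TN": [], "FP": [], "FN": []}
    pairs = zip(true_grades, predicted_grades)
    for index, (true_grade, predicted) in enumerate(pairs):
        actual_positive = true_grade > threshold      # grades 2, 3, 4
        predicted_positive = predicted > threshold    # regression output
        if predicted_positive:
            key = "TP" if actual_positive else "FP"
        else:
            key = "FN" if actual_positive else "TN"
        groups[key].append(index)
    return groups


def sensitivity_specificity(groups):
    """Sensitivity = TP / (TP + FN), specificity = TN / (TN + FP)."""
    tp, tn = len(groups["TP"]), len(groups["TN"])
    fp, fn = len(groups["FP"]), len(groups["FN"])
    return tp / (tp + fn), tn / (tn + fp)


def mean_std_by_outcome(groups, stds):
    """Average predictive standard deviation within each non-empty group."""
    return {key: mean([stds[i] for i in indices])
            for key, indices in groups.items() if indices}


# Toy values for illustration only.
true_grades = [0, 1, 4, 4, 0, 4]
predicted_grades = [0.3, 1.7, 3.2, 1.1, 0.9, 2.4]
predicted_stds = [0.2, 0.9, 0.3, 1.0, 0.25, 0.35]

groups = group_by_outcome(true_grades, predicted_grades)
sensitivity, specificity = sensitivity_specificity(groups)
avg_std = mean_std_by_outcome(groups, predicted_stds)
```

Comparing `avg_std["FN"]` with `avg_std["TN"]`, and `avg_std["FP"]` with `avg_std["TP"]`, corresponds to the comparison of standard deviations between wrong and correct predictions discussed in this section.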

4.4 Messidor-2 results

For the Messidor-2 dataset, the predictions given by DLGP-DR are binarized using the same threshold of 1.5 used for EyePACS. Based on the uncertainty measured on the EyePACS test dataset, those samples predicted as negative for which the standard deviation was higher than 0.84 were changed to positive. The results are reported and compared with the baselines in Table 4.
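The decision rule described above can be written as a short function. This is a sketch under assumptions: the function name `binarize_with_uncertainty` is hypothetical, and strict inequalities are assumed at both thresholds, since the text does not specify how ties are handled.

```python
def binarize_with_uncertainty(mean_grade, std,
                              grade_threshold=1.5, std_threshold=0.84):
    """Return 1 (referable) or 0 (non-referable) for one sample.

    A sample is positive if its predicted grade exceeds the grade
    threshold. A sample predicted as negative is changed to positive
    when its standard deviation exceeds the uncertainty threshold.
    """
    if mean_grade > grade_threshold:
        return 1
    return 1 if std > std_threshold else 0


# Toy (predicted grade, standard deviation) pairs, for illustration only.
samples = [(2.3, 0.40), (0.8, 0.30), (1.2, 0.95), (1.9, 1.10)]
labels = [binarize_with_uncertainty(grade, std) for grade, std in samples]
```

Note that the rule only moves predictions from negative to positive, so it can raise sensitivity at the cost of specificity but never the other way around.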

Table 3: Comparison of DLGP-DR with the baselines on the EyePACS test set.

| Description | Sensitivity | Specificity | AUC |
|---|---|---|---|
| Voets 2019 [16] | 0.906 | 0.847 | 0.951 |
| NN-model | 0.9207 | 0.85 | 0.9551 |
| DLGP-DR | 0.9323 | 0.9173 | 0.9769 |

Table 4: Comparison of DLGP-DR with the baselines on Messidor-2.

| Description | Sensitivity | Specificity | AUC |
|---|---|---|---|
| Voets 2019 [16] | 0.818 | 0.712 | 0.853 |
| NN-model | 0.7368 | 0.8581 | 0.8753 |
| DLGP-DR | 0.7237 | 0.8625 | 0.8787 |

4.5 Discussion

The results reported in Table 3 show that DLGP-DR outperforms the NN-model in specificity and AUC score, and outperforms the results reported by Voets et al. [16] in all metrics. As observed in Table 4, DLGP-DR outperforms both baselines in specificity and AUC score. Although Gulshan et al. have reported an AUC score of \(0.99\) on Messidor-2 [15], as Voets et al. comment in [16], the gap in the results may be due to the fact that the training in that study was done with other images that are not publicly available and with a different grading made by ophthalmologists. Overall, this shows that the global performance of DLGP-DR exceeds that of a neural network-based classifier. In addition, the box plots in Fig. 2 and Fig. 3 show that the standard deviation is higher for false positives and false negatives. This means that the DLGP-DR model has larger uncertainties for wrongly classified patients than for correctly classified ones, which provides the user with a tool to identify wrong predictions. This behavior is especially visible for false negatives, which are the most dangerous mistakes in medical applications, because an ill patient can leave without needed treatment.

5 Conclusions

In this study we took a deep learning model fine-tuned on the EyePACS dataset as a feature extractor. The final task of DR classification and grading was carried out by means of a Gaussian process. For DR binary classification, the proposed DLGP-DR model reached better results than the original deep learning model. We also showed that a fine-grained DR grade classification improves the binary classification performance of the original model.

Also, DLGP-DR enables an uncertainty analysis. This analysis showed that the model could allow the identification of both false negatives and false positives. The former are important due to the high cost of classifying patients as healthy when they are not, and the latter because they increase the costs of health care. The comparison between the Gaussian process and a neural network classifier for DR grades showed once again that Gaussian processes are better tools for the analysis of medical images, for which datasets are usually far too small to be analyzed entirely with deep learning techniques.

Overall, we demonstrate that the integration of deep learning and classical machine learning techniques is highly feasible in applications with small datasets, taking advantage of the representational power of deep learning and the theoretical robustness of classical methods.

Acknowledgments

This work was partially supported by a Google Research Award and by the Colciencias project number 1101-807-63563.